
Do you ever hear those stories from your parents along the lines of “when I was young…”, followed by a tale of how risky life was back then compared to today? You know, stuff like having to walk themselves to school without adult supervision, crazy stuff like that which we somehow seem to worry much more about today than we did then. Never mind that far fewer kids go missing today than 20 years ago and there’s much less chance of them being hit by a car, circumstances are such today that parents are more paranoid than ever.

The solution? Track your kids’ movements, which brings us to TicTocTrack. The best way to understand their value proposition is via this news piece from a few years ago:

Irrespective of what I now know about the product and what you’re about to read here, this sets off alarm bells for me. I’ve been involved with a bunch of really poorly implemented “Internet of Things” things in the past that presented serious privacy risks to those who used them. For example, there was VTech back in 2015 who leaked millions of kids’ info after they registered with “smart” tablets. Then there was CloudPets leaking kids’ voices because the “smart” teddy bears that recorded them (yep, that’s right) then stored those recordings in a publicly facing database with no password. Not to mention the various spyware apps often installed on kids’ phones to track them which subsequently leak their data all over the internet. mSpy leaked data. SpyFone leaked data. Mobiispy leaked data. And that’s just a small slice of them.

And then there’s kids’ smart watches themselves. A couple of years back, the Norwegian Consumer Council discovered a whole raft of security flaws in a number of them which covered products from Gator, GPS for barn and Xplora:

These flaws included the ability for “a stranger [to] take control of the watch and track, eavesdrop on and communicate with the child” and “make it look like the child is somewhere it is not”. These issues (among others), led the council’s Director of Digital Policy to conclude that:

These watches have no place on a shop’s shelf, let alone on a child’s wrist.

Referencing that report, US Consumer groups drew a similar conclusion:

US consumer groups are now warning parents not to buy the devices

The manufacturers fixed the identified flaws… kind of. Two months later, critical security flaws still remained in some of the watches tested, the most egregious of which was with Gator’s product:

Adding to the severity of the issues, Gator Norge gave the customers of the Gator2 watches a new Gator3 watch as compensation. The Gator3 watch turned out to have even more serious security flaws, storing parents and kids’ voice messages on an openly available webserver.

Around a similar time, Germany outright banned this class of watch. The by-line in that piece says it all:

German parents are being told to destroy smartwatches they have bought for their children after the country’s telecoms regulator put a blanket ban in place to prevent sale of the devices, amid growing privacy concerns.

Wow – destroy them! The story goes on to refer to the German Federal Network Agency’s rationale which includes the fact that “parents can use such children’s watches to listen unnoticed to the child’s environment”. This is a really important “feature” to understand: these devices aren’t just about tracking the kids’ whereabouts, they’re also designed to listen to their surroundings… including their voices. Now on the one hand you might say “well, parents have a right to do that”. Maybe so, maybe not, you’ll hear vehement arguments on that both ways. But what if a stranger had that ability – how would you feel about that? We’ll come back to that later.

Around a year later, Pen Test Partners in the UK found more security bugs. Really bad ones:

Guess what: a train wreck. Anyone could access the entire database, including real time child location, name, parents details etc.

This wasn’t just bad in terms of the nature of the exposed data, it was also bad in terms of the ease with which it was accessed:

User[Grade] stands out in there. I changed the value to 2 and nothing happened, BUT change it to 0 and you get platform admin.

So change a number in the request and you become God. This is something which is easily discovered in minutes either by a legitimate tester within the organisation building the software (which obviously didn’t happen) or… by someone with malicious intent. The Pen Test Partners piece concludes:

We keep seeing issues on cheap Chinese GPS watches, ranging from simple Insecure Direct Object Request (IDOR), to this even simpler full platform take over with a simple request parameter change.
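To make the “simple request parameter change” concrete, here is a minimal Python sketch of how a flaw like that can arise and how an allowlist closes it. It is an illustration only: the function names, the record layout and the set of editable fields are assumptions made for this example, not the vendor’s code. The only detail taken from the quote above is that a grade of 0 reportedly meant platform admin.

```python
# Hypothetical sketch: the record layout and function names are assumptions.
# Per the Pen Test Partners quote, a grade of 0 reportedly meant platform admin.
ADMIN_GRADE = 0

# Assumed allowlist of fields a user may change about themselves.
EDITABLE_FIELDS = {"name", "email"}


def update_profile_vulnerable(user: dict, submitted: dict) -> dict:
    """Copy every submitted field onto the stored record, including 'grade'."""
    updated = dict(user)
    updated.update(submitted)  # the client decides its own privilege level
    return updated


def update_profile_allowlisted(user: dict, submitted: dict) -> dict:
    """Apply only fields the user is allowed to edit; ignore everything else."""
    updated = dict(user)
    for field, value in submitted.items():
        if field in EDITABLE_FIELDS:
            updated[field] = value
    return updated


stored = {"name": "Parent", "email": "parent@example.com", "grade": 2}
tampered_request = {"name": "Parent", "grade": ADMIN_GRADE}

escalated = update_profile_vulnerable(stored, tampered_request)
unchanged = update_profile_allowlisted(stored, tampered_request)
```

In the first function the tampered request silently promotes the account, while the second leaves the stored grade untouched because privilege is never read from user input.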

Keep that exploit in mind – insecure direct object references are as simple as taking a URL like this:

example.com/get-kids-location?kid-id=27

And changing it to this:

example.com/get-kids-location?kid-id=28
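In code, the difference between a vulnerable lookup and a safe one can be a single ownership check. The Python sketch below is hypothetical: the `Kid` record, the in-memory `KIDS` store, the coordinates and both function names are assumptions for illustration and say nothing about how any real service is built.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass
class Kid:
    kid_id: int
    parent_id: int                  # account that owns this record
    location: Tuple[float, float]   # made-up coordinates


# Hypothetical in-memory store standing in for the service's database.
KIDS = {
    27: Kid(27, parent_id=1, location=(-27.95, 153.40)),
    28: Kid(28, parent_id=2, location=(-33.87, 151.21)),
}


class Forbidden(Exception):
    """Raised when the logged-in account does not own the requested record."""


def get_location_vulnerable(session_parent_id: int, kid_id: int):
    # IDOR: the id from the request is trusted and the session is ignored.
    return KIDS[kid_id].location


def get_location_checked(session_parent_id: int, kid_id: int):
    # The record is only returned if it belongs to the logged-in account.
    kid = KIDS.get(kid_id)
    if kid is None or kid.parent_id != session_parent_id:
        raise Forbidden("not your record")
    return kid.location
```

With parent 1 logged in, `get_location_vulnerable(1, 28)` hands back another family’s child, whereas `get_location_checked(1, 28)` raises `Forbidden`. Treating a missing record and someone else’s record the same way also avoids confirming which ids exist.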

The level of sophistication required to exploit an IDOR vulnerability boils down to being able to count. That was in January this year, fast forward a few months and Ken Munro from Pen Test Partners contacts me. He’s found more serious vulnerabilities with the services these devices use and in particular, with TicTocTrack’s product. He believes the same insecure direct object reference issues are plaguing the Aussie service and they need someone on the ground here to help establish the legitimacy of the findings.

To test Pen Test Partners’ theory, I decided to play your typical parent in terms of the buying and setup process and use my 6-year-old daughter, Elle, as the typical child. She’s smack bang in the demographic of who the watch is designed for and I was happy to give Ken access to her movements for the purposes of his research. So it’s off to tictoctrack.com.au where the site leans on its Aussie origins:

I can understand why companies emphasise the “we host your data near you” mantra, but in practical terms it makes no difference whether it’s in Australia or, say, the US. You’re also often talking about services that are written and / or managed by offshore companies anyway so where the data physically sits really is inconsequential (note: this is assuming no regulatory obligations around co-locating data in the country of origin). The “we take the security of your data seriously” bit, however, always worries me and as you’ll see shortly, that concern is warranted.

The Aussie angle comes up again further down the page too:

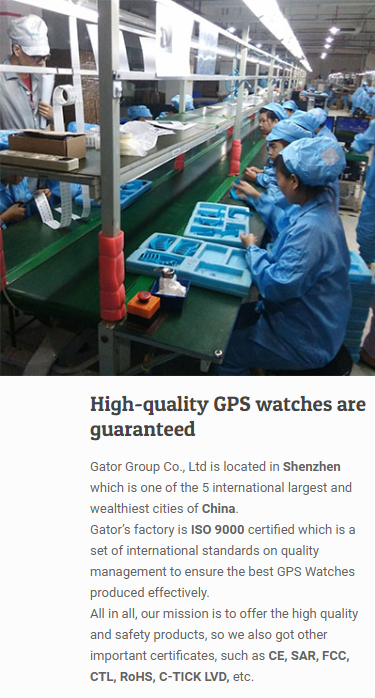

At this point it’s probably worthwhile pointing out that despite the Aussieness asserted on the front page, the origin of the watch isn’t exactly very Australian. In fact, the watch should be rather familiar by now:

So for all the talk of TicTocTrack, the hardware itself is actually Gator. In fact, you can see exactly the same devices over on the Gator website:

It’s not clear how they arrived at the conclusion of “the world’s most reputable GPS watch for kids and elders”, especially given the earlier findings. And who is Gator? They’re a Chinese company located in Shenzhen:

The country of origin would be largely inconsequential were it not for TicTocTrack’s insistence on playing the Aussie card earlier on. It’s also relevant in light of the embedded media piece at the start of this blog post: this isn’t “a new device developed by a Brisbane mother” nor is the mother “the creator of the watch”. In fairness to Karen Cantwell, it wasn’t her making those claims in the story and the media does have a way of spinning things, but it’s important to be clear about this given how this story unfolds from here.

Regardless, let’s proceed and actually buy the thing. I get Elle involved and allow her to choose the colour, with rather predictable results:

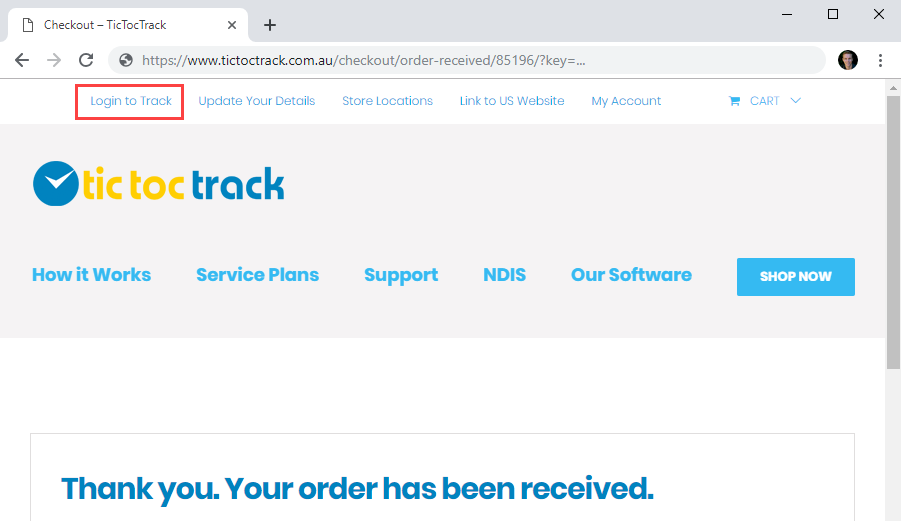

The terms and conditions were actually pretty light (kudos for that!) but the link to the privacy and security policies was dead. I go through the checkout process and buy the watch:

iStaySafe Pty Ltd is the parent company and we’ll see that name pop up again later on. An email promptly arrives with a receipt and a notice about the order being processed, albeit without a delivery time frame mentioned. With time to kill, I decide to poke around and take a look at how the tracking works, starting with the link below:

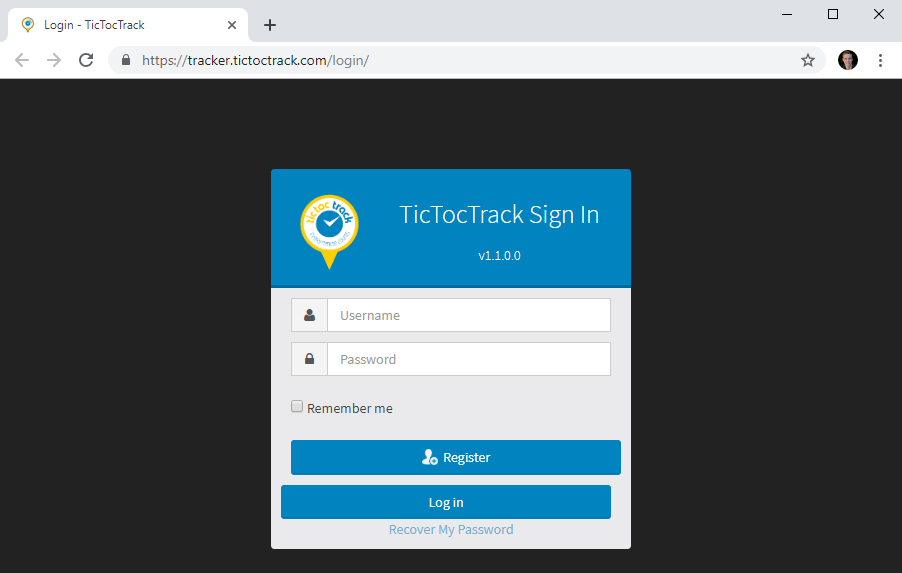

Turns out the tracking app is a totally different website running on a totally different hosting provider in a totally different state:

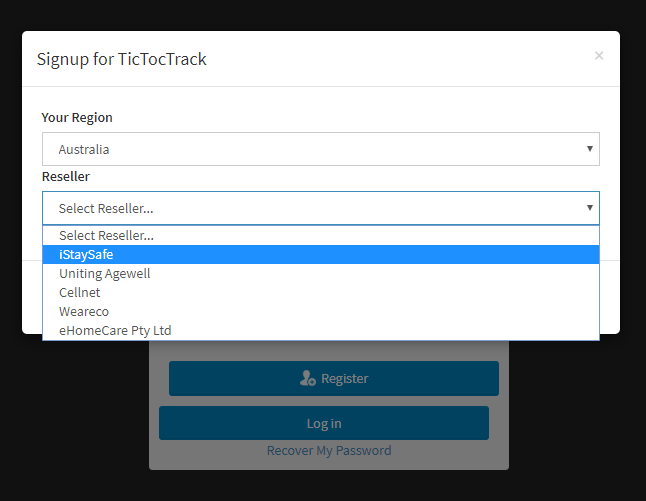

The primary site is down in Melbourne whilst the tracking site is in Brisbane per the info on the front page. My credentials from the primary site don’t work there and registering results in me needing to choose a reseller:

Here we see iStaySafe again, but it’s the other resellers (all Aussie companies) that help put the whole Gator situation in context. Uniting Agewell provides services to the elderly and when considering the nature of the Gator watch, it made me think back to a comment on the Chinese manufacturer’s website: “the world’s most reputable GPS watch for kids and elders”. Cellnet is a publicly listed company with a heap of different brands. Weareco produces uniforms. eHomeCare provides “smart care technology for healthy ageing” and their product page on the GPS tracking watch explains the relationship:

As it turns out, attempting to sign up just boots me back to the TicTocTrack website so I assume I just need to wait for the watch to arrive before going any further. Still, this has been a useful exercise to understand not just how the various entities relate to each other, but also because it shows that the scope of this issue isn’t just constrained to kids, it affects the elderly too.

A few days later, this lands in the mail:

I’m surprised by how chunky it is – this is a big unit! For context, here it is next to my series 4 Apple Watch (44mm – the big one):

I’m not exactly expecting Apple build quality here (and as you can see from the pic, it’s a long way from that), but this is a lot to put on a little kid’s wrist. You can see the access port for the physical SIM card (more on that later), as opposed to Apple’s eSIM implementation so it’s obviously going to consume a bunch of space when you’re building a physical caddy into the design to hold a chip on a card.

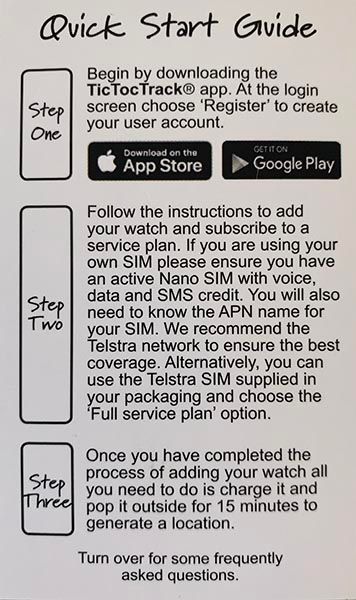

Regardless, let’s get on with the setup process and I’m going to be your average everyday parent and just follow the instructions:

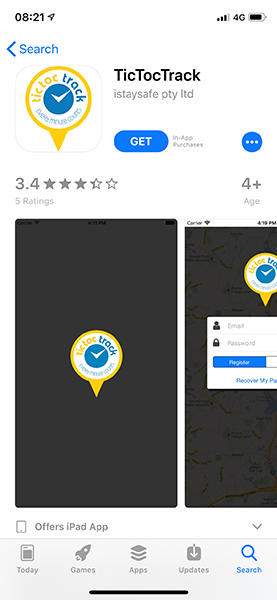

The app is branded TicTocTrack and is published by iStaySafe:

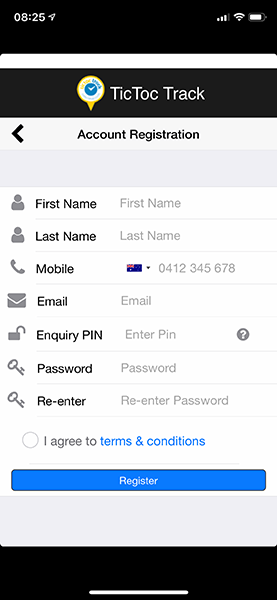

Popping it open, the first step is registration (the mobile number is a pre-filled placeholder):

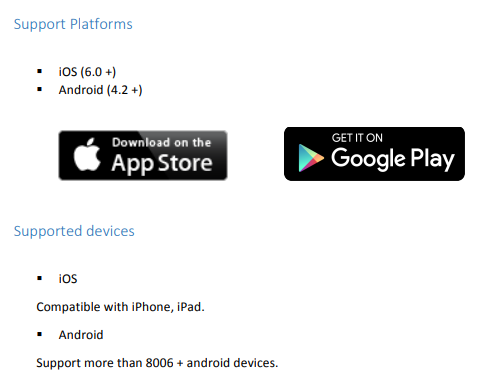

I’m surprised by the empty space at the top and the bottom – just which generation of iPhone was this designed for? Certainly not the current gen XS, does that resolution put it back in about the iPhone 5 era from 2012? That’d be iOS 6 days which their user manual seems to suggest:

Whilst the aesthetics of the app might seem inconsequential, I’ve always found that it’s a good indicator of overall quality and is often accompanied by shortcomings of a more serious nature. It’s the little things that keep popping up, for example the language and grammar in the aforementioned user manual. Why is it “Support Platforms” and then “Supported devices”? And why is the opening sentence of the doc so… odd?

Welcome to TicTocTrack® User Manual! You are about to begin your journey with the live tracking with your family.

That sort of language appears every now and then, for example in the password reset section:

If you forget your password, please use web portal to obtain new password.

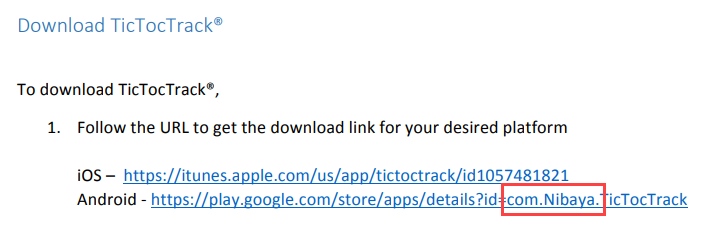

It has me wondering how much of this was outsourced overseas and again, that wouldn’t normally be worth mentioning were it not for the emphasis placed on the Aussie origins of the service (I know, despite it being a Chinese watch). The actual origins of the service become clear once you look at the download links for the app:

Searching for that same “Nibaya” name on the TicTocTrack website turns up several different versions of the user manual:

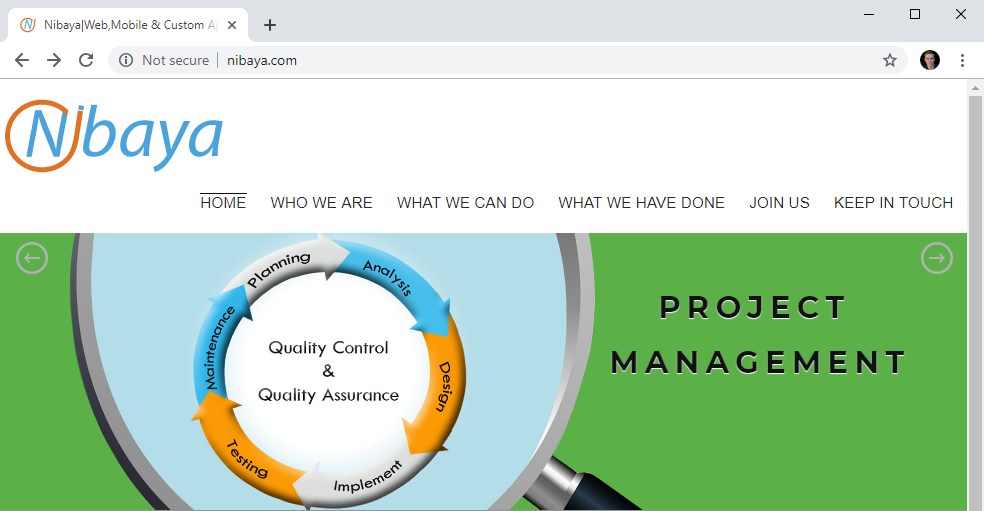

It turns out that Nibaya is a Sri Lankan software development company with a focus on quality control and quality assurance:

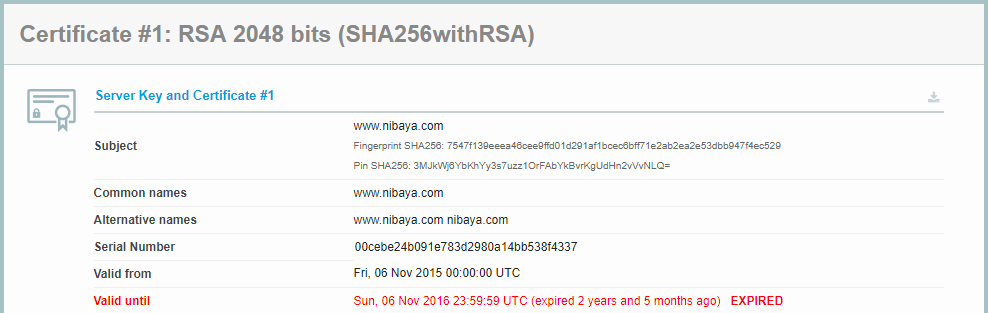

We’re also told by the browser that they’re “Not secure” which is not a great look in this day and age. They do in fact have a certificate on the site, only thing is it expired two and a half years ago and they haven’t bothered to renew it:

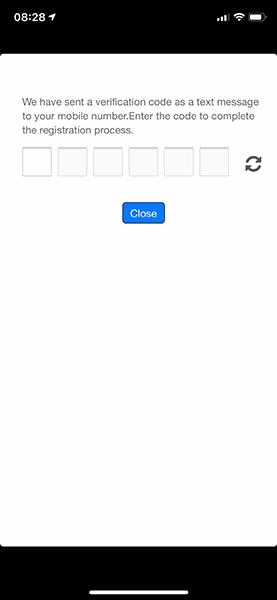

Moving on, there’s a mobile phone number verification process which sends an SMS to my device:

Only thing is, the keyboard defaults back to purely alphabetical after every character is typed so unless you pre-fill the field from the SMS (which iOS natively allows you to do), it’s a bit painful. Again, it’s all the little things.

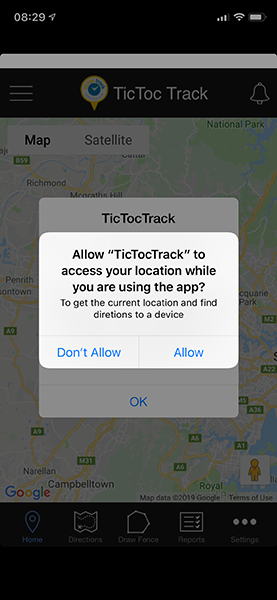

Following successful number verification, the app fires up and asks for access to location data:

Based on what I’d already read in the user manual, my location data can be used to direct me to a child wearing the watch so requesting this seems fine for that feature to function correctly.

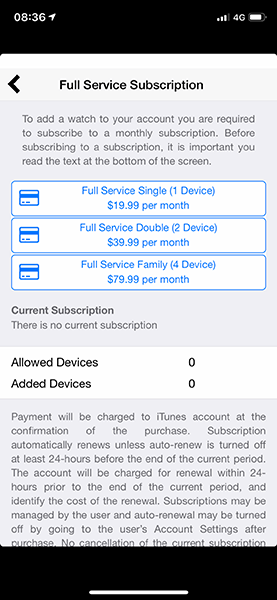

Next is the money side of things and we’re looking at $20 a month for the “Full Service Subscription”:

If I’m honest, I’m still a bit confused about what this entails. Is this for the tracking service? Or for the Telstra SIM which it shipped with and is identically priced?

Or is it for both? I’m assuming both but then when I look at the service plans on the website, none of them are priced at $19.99. Regardless, I take the $20 option and move on:

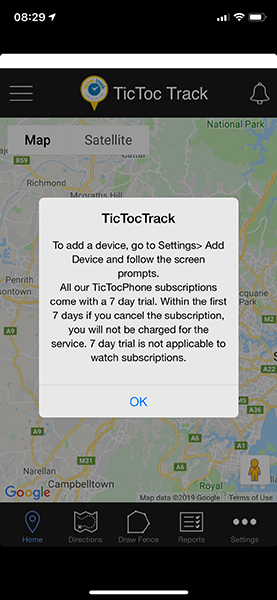

The adding a device bit I get – I’m going to need to pair the watch – but the subscription bit further confuses me because I’ve literally just bought a subscription on the previous screen! For my purposes I don’t see myself needing it for any more than 7 days anyway so I’m not too concerned, let’s go and add that new device:

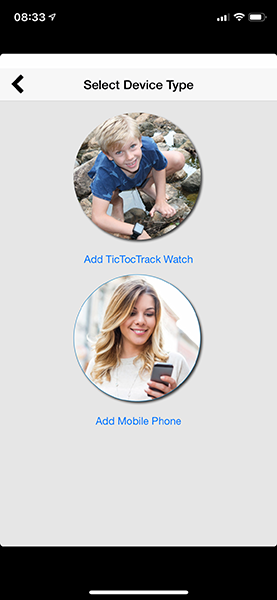

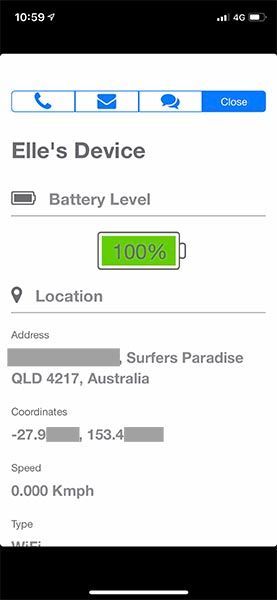

A new TicTocTrack watch it is:

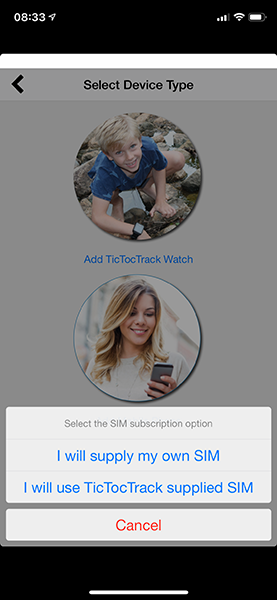

And let’s go with the supplied SIM which then leads us to the device and SIM registration page:

The IMEI is the identifier of the device itself (the watch) and that can be scanned off the barcode in the packaging. The SIM ID relates to the pre-packaged SIM from Telstra, the barcode for which is under one of the grey obfuscation boxes in the earlier image. I call the device “Elle”, register it and that’s that.

Lastly, I insert the SIM into the watch (the metal flap for which opens in the opposite direction to the video tutorial and took me a good 5 minutes to work out for fear of breaking it), then drop it onto the power. Give it a couple of hours to charge, boot it up and shortly afterwards it’s showing a 3G connection:

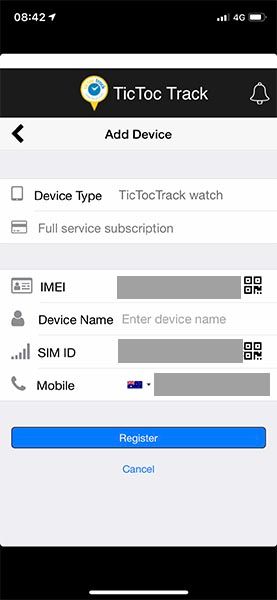

I give it a little time to sync to the TicTocTrack service then successfully find it in the app:

Drilling down on Elle’s profile, I get an address and GPS coordinates which are both pretty accurate:

To its credit, the watch does a pretty good job of the setup and tracking process once you’re past some of the earlier hurdles. At this stage, I now have a device which is broadcasting its location reliably and I can successfully see it in the app. I’m not going to go through other features such as the ability to send an SOS or make a call, at this stage all I really care about is that the watch is now tracking her movements.

The next day, we head off to tennis camp (it’s school holiday time) with the TicTocTrack / Gator on her wrist:

She isn’t aware of why she has the watch, to her it’s just a new cool thing she gets to wear. And it’s pink so that’s all boxes ticked. She’s now at the local court whilst I (in my helicopter parent mode), am sitting at home watching her location on my device:

Safe in the knowledge that my little girl is in a place that I trust, I get back to work. But someone else is also watching her location, someone on the other side of the world who is now able to track her every move – it’s Ken. Not only is Ken watching, as far as TicTocTrack is concerned he’s just taken her away:

She’s no longer playing tennis, she’s now in the water somewhere off Wavebreak Island. This isn’t a GPS glitch; Ken has placed her four and a half kilometres away by exploiting an insecure direct object reference vulnerability in TicTocTrack’s API. He’s done this with my consent and only to my child, but you can see how this could easily be abused. It’s not just the concept of making someone’s child appear in a different location to what the parents expect, you could also have them appear exactly where the parents expect… when they’re actually nowhere near there.

But these devices are about much more than just location tracking, they also enable 2-way voice communications just as you’d have on a more traditional cellular phone. This, in turn, introduces a far creepier risk – that unknown parties may be able to talk to your kids. In order to demonstrate this, I put the watch back on Elle and gave Pen Test Partners permission to contact her. Pay attention to how much interaction is required on her part in order for a stranger to begin talking to her simply by exploiting a vulnerability in the TicTocTrack service:

Even for me, that video is creepy. It required zero interaction because Vangelis was able to add himself as a parent and a parent can call the device and have it automatically answer without interaction by the child. The watch actually says “Dad” next to a little image of a male avatar so a kid would think it was their father calling them:

This is precisely what the Germans were worried about when they banned the watches outright and when you watch that video, it seems like a pretty good move on their part.

The exploits go well beyond what I’ve already covered here too, for example:

That link goes off to a Facebook post by an account called Travelling with Kids which very enthusiastically espouses the virtues of tracking them (it’s not explicitly said, but the post appears to be promotional in nature):

The little wanderers were stoked to be going off to kids club at the Hard Rock Hotel Bali We have complete peace of mind knowing they’re wearing their TicTocTrack watches, so they can call us at anytime and with GeoFencing we know their location

By now, I’m sure you can see the irony in the “peace of mind” statement.

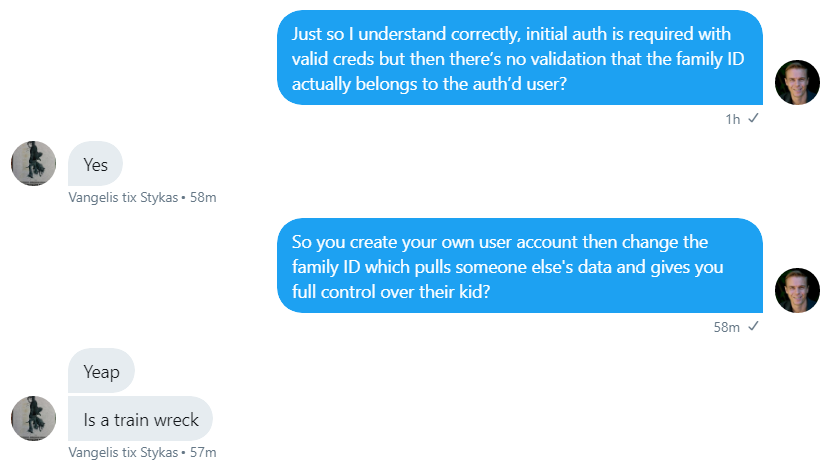

The technical flaws go much further than this but rather than covering them here, have a read of the Pen Test Partners write-up which includes details of the IDOR vulnerability. Just to put it in layman’s terms, here’s the discussion I had with Vangelis about it:

I’m conscious that many people who don’t normally travel in information security circles will read this, so it’s worth saying up front that handling a vulnerability of this nature in a responsible fashion is enormously important. Obviously you want to remove the risk ASAP, but you also want to make sure that information about how to exploit it isn’t made public beforehand. We religiously followed established best practices for responsible disclosure; here’s the timeline with dates being local Aussie ones for me:

- Saturday 6 April: Ken first contacts me about the watch. I order one that morning.

- Tuesday 9 April: Watch arrives.

- Wednesday 10 April: I set the account up.

- Thursday 11 April: Elle wears the watch to tennis and we test “relocating” her.

- Friday 12 April: Vangelis calls her and has the discussion in the video above. Ken privately discloses the vulnerability to TicTocTrack support that night.

- Monday 15 April (today): TicTocTrack takes the service offline.

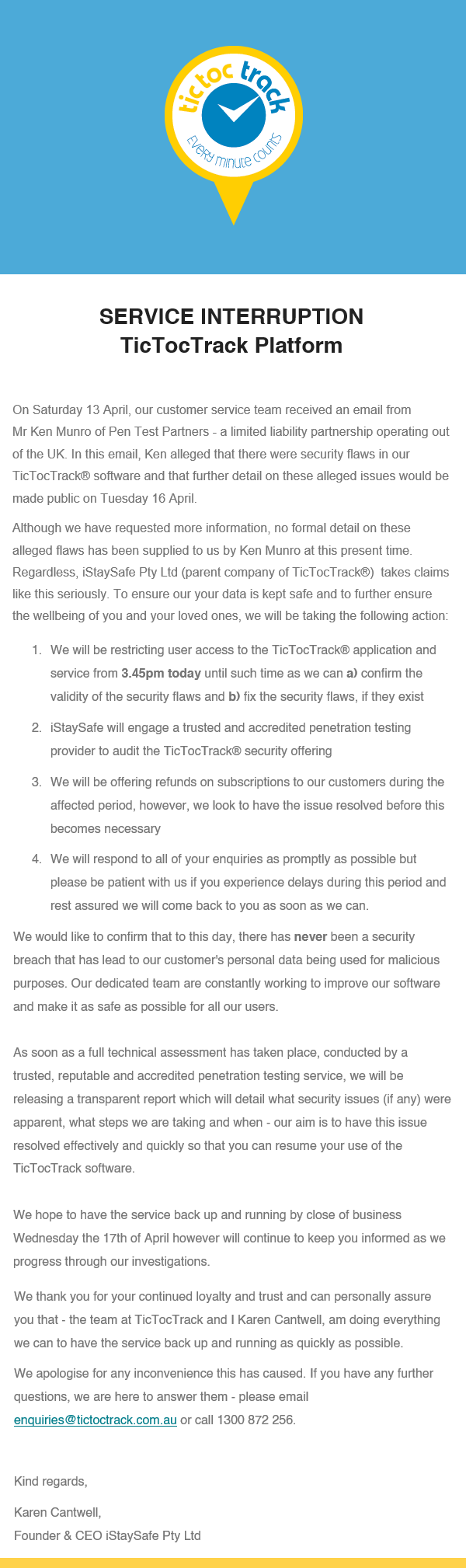

A couple of hours before publishing, I received a notification to the email address I signed up with as follows:

I’m in 2 minds about this message: on the one hand, they took the service down as fast as we could reasonably expect, being within a single business day so kudos to them on that. On the other hand, the messaging worries me in a number of ways:

Firstly, Ken didn’t just “allege” that there were security flaws, he spelled it out. His precise wording was “The service fails to correctly verify that a user is authorised to access data, meaning that anyone can access any data, should they so wish”. Anyone testing for a flaw of this nature would very quickly establish that changing a number in the request would hand over control of someone else’s account thus proving the vulnerability beyond any shadow of a doubt. That word was used 3 times in the statement and it implies that they’re unsubstantiated claims; they’re clearly not. Which brings me to the next point:

Secondly, it wouldn’t make sense to pull down the entire service if you weren’t convinced there was a serious vulnerability. Many people allege there are security flaws in services but they don’t generally go offline until they’re proven. Clearly an incident like this has a bunch of downstream impact and acknowledging it publicly is not something you do on a whim. Either TicTocTrack was very confident in the accuracy of Ken’s report (well beyond what “alleged” implies) or there were other factors I’m not aware of that drove them to rapidly pull the service.

Thirdly, the following statement was made without citing any evidence: “there has never been a security breach that has lead to our customer’s personal data being used for malicious purposes”. It’s not uncommon to see a response like this following a security incident, but what it should read is “we don’t know if there’s ever been a security breach…” This vulnerability relied on an authenticated user with a legitimate account modifying a number in the request and the likelihood of that being logged in a fashion sufficient to establish it ever happened is extremely low. And if you were the kind of developers to log this sort of information, you’d also be the kind not to have the vulnerability in the first place!
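For a sense of what “logged in a fashion sufficient” would mean, here is a minimal Python sketch. It assumes, purely hypothetically, that every API call was recorded with both the authenticated account and the identifier it requested, and that an ownership table exists to compare against; none of these names come from TicTocTrack.

```python
from typing import Dict, List, NamedTuple


class AccessRecord(NamedTuple):
    """One logged API call: who was logged in and whose data they asked for."""
    account_id: int  # authenticated account making the request
    kid_id: int      # identifier supplied in the request


def cross_account_accesses(
    log: List[AccessRecord], owners: Dict[int, int]
) -> List[AccessRecord]:
    """Return every logged request for a child the requester does not own.

    `owners` maps kid_id -> owning account_id (an assumed ownership table).
    """
    return [r for r in log if owners.get(r.kid_id) != r.account_id]


# Made-up data: account 1 owns kid 27, account 2 owns kid 28.
owners = {27: 1, 28: 2}
log = [AccessRecord(1, 27), AccessRecord(1, 28), AccessRecord(2, 28)]
suspicious = cross_account_accesses(log, owners)
```

Without both fields in the log there is nothing to compare, which is why a claim that no breach ever occurred is so hard to back up after the fact.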

Let’s be perfectly clear – this is just one more incident in a series of similar ones impacting kids’ tracking watches and Gator in particular. What’s infuriating about this situation is that not only do these egregiously obvious security flaws keep occurring, they’re just not being taken seriously enough by the manufacturers and distributors when they do occur. There’s no finer illustration of this than the statement Ken got when speaking to an agent over in his corner of the world:

UK agent for Gator said that they didn’t have the money for security, as otherwise they couldn’t afford a staff Xmas party

Is that really where we’re at? Tossing up between exposing our kids in this fashion and beers at Christmas? If you’re a parent ever considering buying one of these for your kid, just remember that quote. Inevitably, cost would have also been a major driver for TicTocTrack outsourcing their development to Sri Lanka, indeed it’s something that Nibaya prides itself on:

I want to finish on a broader note than just TicTocTrack or Gator or even smart watches in general; a huge number of both the devices and services I see being marketed either directly at kids or at parents to monitor their kids are absolute garbage in terms of the effort invested in security and privacy. I mentioned CloudPets and VTech earlier on and I also mentioned spyware apps; by design, every one of these has access to data that most parents would consider very personal and, in many cases, (such as the photos older kids are often taking), very sensitive. These products are simply not designed with a security-orientated mindset and the development is often outsourced to cheap markets that build software on a shoestring. The sorts of flaws we’re seeing perfectly illustrate that: CloudPets simply didn’t have a password on their database and both the VTech and TicTocTrack vulnerabilities were as easy as just incrementing a number in a web request. A bunch of the spyware breaches I referred to occurred because the developers literally published all the collected data to the internet for the world to see. How much testing do you think actually went on in these cases? Did nobody even just try adding 1 to a number in the request? Because that’s all Ken needed to do; Ken can count therefore Ken can hack a device tracking children. Maybe I should give Elle a go at that, her counting is coming along quite nicely…

There’s only one way I’d track my kids with GPS and cellular and that’s with an Apple Watch. I don’t mean to make that sound trivial either because we’re talking about a $549 outlay here which is a hell of a lot to spend on a kid’s watch (plus you still need a companion iPhone), but Apple is the sort of organisation that not only puts privacy first, but makes sure they actually pay attention to their security posture too. As that Gator agent in the UK well knows, security costs money and if you want that as a consumer, you’re going to need to pay for it.

I’ll leave you with this thread I wrote up when first starting to look at the watch. It got a lot of traction and I’d like to encourage you to share it with your parenting friends on Twitter or via the one I also posted to Facebook.

I've been looking at a bunch of kid-related devices and services lately, mostly relating to how parents can monitor and control their activities. It's just consistently horrifyingly bad; FUD-ridden at best, massive privacy violations at worst (i.e. data accessible to the public).

— Troy Hunt (@troyhunt) April 6, 2019

The problem is that you've got a bunch of technically illiterate parents (understandable) being pushed things by schools that are influenced by marketers (much less understandable) and built with near zero focus on security (inexcusable).

— Troy Hunt (@troyhunt) April 6, 2019

You worried about your kids online? Talk to them. Browse the web with them. Introduce them to the wonders of the web on your terms and *physically* monitor them (you know, like exist together in the same room for a bit).

— Troy Hunt (@troyhunt) April 6, 2019

And accept that they're going to see porn. They're going to swear in chats. They're going to talk to people you don't like. And 90%+ of the time, they're more technically adept than their parents and will know how to hide it and circumvent the parental controls.

— Troy Hunt (@troyhunt) April 6, 2019

I'll talk to my kids all day long about this stuff, but I'll never install the sorts of software or buy the kinds of tracking devices I keep seeing peddled. These things are consistently absolute rubbish and they prey on scared and uninformed parents and teachers to get traction.

— Troy Hunt (@troyhunt) April 6, 2019

Lastly, resources from the big OS vendors to help parents without putting kids even further at risk:

Apple: https://t.co/9lzpSJoH5s

Microsoft: https://t.co/jnQ06xp2L5

Google: https://t.co/jeOdqUz37Y

— Troy Hunt (@troyhunt) April 6, 2019